Post-Merger Global Marketing Automation and Web Consolidation

Standardizing AEM + Pardot + Salesforce across 8 regions

Fragmentation was the baseline state. Not by design, just by accumulation. Two legacy organizations had been merged, but nobody had merged the marketing infrastructure. Every region had built its own workarounds — different templates, different consent implementations, different scoring models, different everything. The technical debt was real, but the organizational debt was worse: eight regional teams, each convinced their local approach was the right one.

- Multiple web properties with no shared CMS workflow

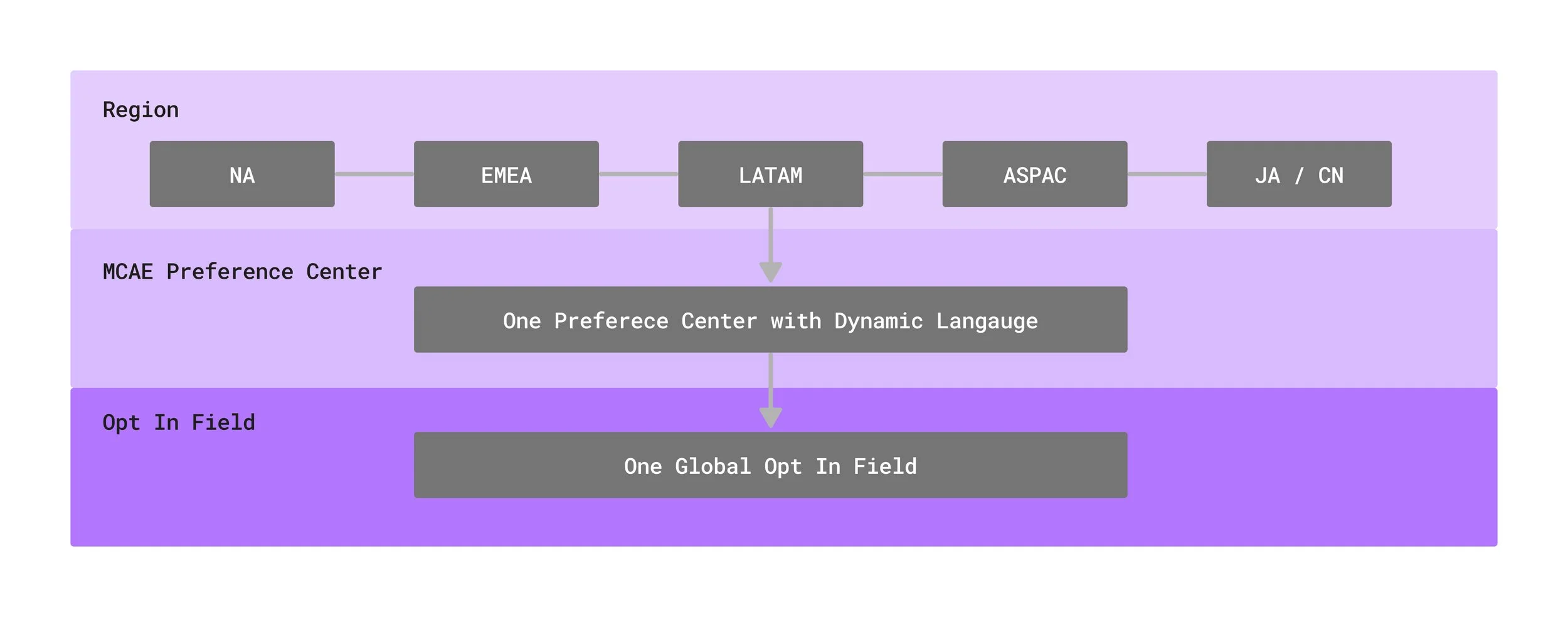

- Region-specific consent language and opt-in implementations with no consistency

- Three or more scoring variations across regions, no shared MQL definition

- Manual CSV uploads, manual campaign enrollment, manual everything

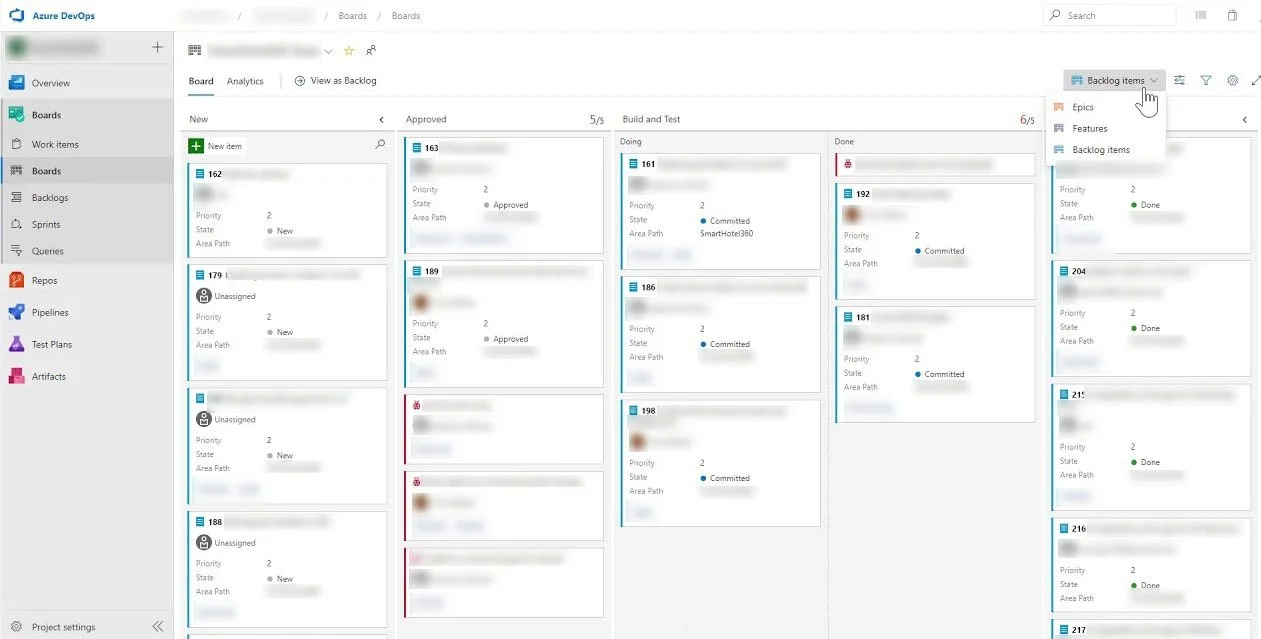

- QA was informal. Release risk was higher than it should have been.

- Reporting was hard to trust because the underlying data was not clean.

Sales did not trust the leads. Compliance exposure was real in the EU and APAC. Campaign launches took weeks.

Getting eight regional teams to adopt a single global model was the hardest part of this project. Every region had a legitimate reason why its market was different. The approach was not to dismiss those differences but to build a system flexible enough to handle regional compliance requirements within a shared architecture, and then to prove it worked in North America (NA) before asking anyone else to adopt it.

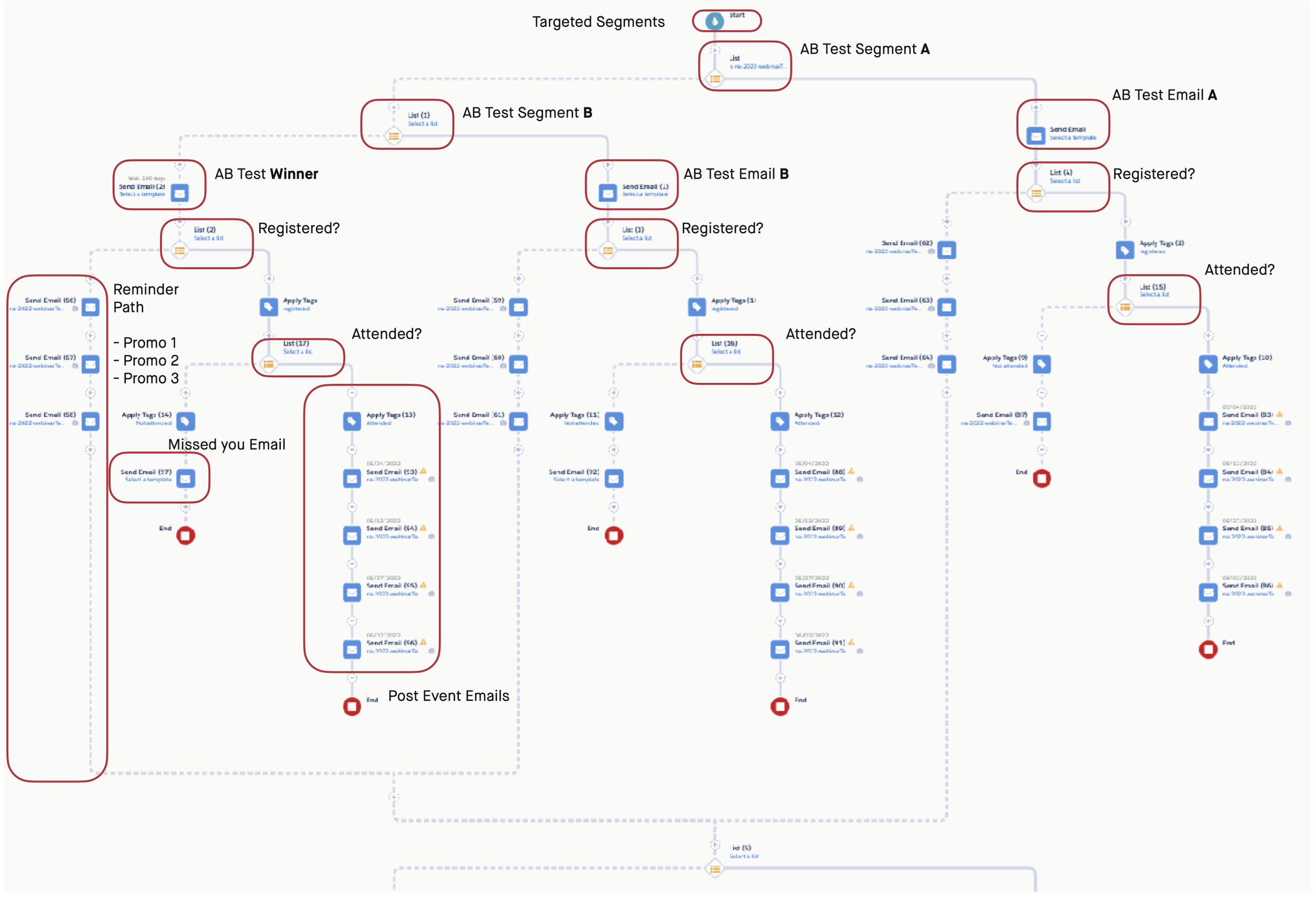

The rollout followed a deliberate wave structure. NA went first as the pilot, not because it was easiest but because it was the most controlled environment in which to validate the architecture.

Each subsequent wave — EMEA, LATAM, APAC, Japan, China — had a documented go-live checklist, cross-time-zone handoff protocols, and entry criteria that had to be met before launch. Regional teams were involved in UAT for their wave, which built ownership and surfaced locale-specific issues early.
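The entry criteria worked as a gate: a wave did not launch until they were met. As a minimal sketch of that rule, assuming each criterion can be recorded as a simple pass/fail flag, the hypothetical `WaveReadiness` class below blocks launch while any item is outstanding. The criterion names are illustrative placeholders, not the program's actual checklist.

```python
from dataclasses import dataclass, field


@dataclass
class WaveReadiness:
    """Hypothetical go-live readiness record for one rollout wave."""

    region: str
    # Criterion name -> passed? The names used below are illustrative only.
    criteria: dict[str, bool] = field(default_factory=dict)

    def blockers(self) -> list[str]:
        """Criteria that have not passed yet, in the order they were listed."""
        return [name for name, passed in self.criteria.items() if not passed]

    def can_launch(self) -> bool:
        """Launch only if the checklist is non-empty and nothing is blocking."""
        return bool(self.criteria) and not self.blockers()


emea = WaveReadiness(
    region="EMEA",
    criteria={
        "consent_language_localized": True,
        "regional_uat_signed_off": False,
        "time_zone_handoff_owner_assigned": True,
    },
)
print(emea.can_launch())  # False
print(emea.blockers())    # ['regional_uat_signed_off']
```

Treating an empty checklist as "not ready" is a deliberate choice in this sketch, so a wave cannot pass vacuously just because nobody filled in its criteria.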

This was a twelve-month program with regular late-night calls across time zones. The coordination overhead was real. What made it work was that every wave benefited from the documented learnings of the previous one — the playbook got better with each region.

| Metric | Result |
|---|---|
| Campaign deployment time | Reduced 62% (3–4 weeks → 4–7 days) |
| MQL to SQL conversion | Increased 17% |
| CRM-marketing field alignment | 72% → 96% |
| Duplicate lead records | Reduced 38% |
| Post-launch defects | Reduced 33% |
| Rollback incidents | Reduced 40% |
| On-time regional launches | 95% |
| Regions on unified architecture | 8 |

Email engagement improved as a downstream effect of better targeting, cleaner consent data, and template consistency. Not a creative story — a data and architecture story.

| Metric | Before | After |
|---|---|---|
| Email open rate | 19.5% | 23.4% |
| Click-through rate (CTR) | 2.6% | 4.5% |
| Unsubscribe rate | 1.9% | 0.5% |
| List growth | -5.2% | +7.1% |
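To compare the email metrics on a common footing, the short calculation below converts each before/after pair into a percentage-point difference and a relative change, (after - before) / before, using only the figures in the table. The function names are illustrative.

```python
# Before/after email metrics from the table, in percent.
METRICS = {
    "Open rate": (19.5, 23.4),
    "CTR": (2.6, 4.5),
    "Unsubscribe rate": (1.9, 0.5),
}


def point_change(before: float, after: float) -> float:
    """Absolute difference in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change expressed as a fraction of the starting value."""
    return (after - before) / before


for name, (before, after) in METRICS.items():
    print(
        f"{name}: {point_change(before, after):+.1f} pts, "
        f"{relative_change(before, after):+.0%} relative"
    )
```

List growth is left out on purpose: its starting value is negative (-5.2%), so a relative change measured against that base would not be meaningful.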

Consent and suppression logic was standardized across all eight regions. Lead scoring had a single shared MQL definition calibrated with sales input. Campaign launches that previously required weeks of regional coordination could be executed in 4–7 days. Sales started trusting the leads because the data was clean and the routing was reliable.
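One way to reconcile a single shared MQL definition with regional compliance differences is to keep the scoring rule global and express regional variation only as a consent gate evaluated before qualification. The sketch below illustrates that separation under stated assumptions: the threshold, region codes, `Lead` fields, and opt-in policy table are hypothetical placeholders rather than the program's calibrated values, and it does not call Pardot or Salesforce APIs.

```python
from dataclasses import dataclass

# Hypothetical: one global MQL threshold shared by every region.
MQL_SCORE_THRESHOLD = 100

# Hypothetical regional policy table: the only thing that varies by region
# here is whether outreach needs a recorded explicit opt-in.
REQUIRES_EXPLICIT_OPT_IN = {
    "NA": False,
    "EMEA": True,
    "APAC": True,
}


@dataclass
class Lead:
    region: str
    score: int
    explicit_opt_in: bool
    suppressed: bool = False  # e.g. unsubscribed or on a do-not-contact list


def may_contact(lead: Lead) -> bool:
    """Global suppression check, then the region's consent rule."""
    if lead.suppressed:
        return False
    # Regions missing from the table fall back to the stricter rule.
    if REQUIRES_EXPLICIT_OPT_IN.get(lead.region, True):
        return lead.explicit_opt_in
    return True


def is_mql(lead: Lead) -> bool:
    """Same MQL rule everywhere; regions differ only in the consent gate."""
    return may_contact(lead) and lead.score >= MQL_SCORE_THRESHOLD


leads = [
    Lead("NA", 120, explicit_opt_in=False),
    Lead("EMEA", 120, explicit_opt_in=False),
    Lead("EMEA", 120, explicit_opt_in=True),
    Lead("APAC", 80, explicit_opt_in=True),
]
print([is_mql(lead) for lead in leads])  # [True, False, True, False]
```

Defaulting unlisted regions to the stricter consent rule means a missing configuration entry fails closed instead of silently routing leads that may not be contactable.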

The system is stable and adopted, but it's not done. The next maturity curve is self-service: giving regional marketers a reporting layer so they can validate their own data without routing through central ops. Progressive profiling in the preference center would improve list quality over time without adding form friction. Lead scoring needs a formal quarterly recalibration cycle tied to actual sales outcomes — set-it-and-forget-it doesn't work. And QA governance should become a shared playbook that regional teams can own, reducing the dependency on central coordination for standard launches.
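A quarterly recalibration could start from a simple closed-loop check: for a few candidate thresholds, compare how often leads at or above the threshold actually became sales-qualified versus leads below it. The sketch assumes a hypothetical export of `(score, became_sql)` pairs for the quarter; the function name, the sample data, and the candidate thresholds are all made up for illustration and are not results from this program.

```python
from typing import Iterable


def conversion_by_threshold(
    outcomes: Iterable[tuple[int, bool]], threshold: int
) -> tuple[float, float]:
    """Return (SQL rate at/above threshold, SQL rate below threshold).

    A rate is reported as 0.0 when its group is empty.
    """
    rows = list(outcomes)  # materialize so a generator can be read twice
    above = [became_sql for score, became_sql in rows if score >= threshold]
    below = [became_sql for score, became_sql in rows if score < threshold]

    def rate(group: list[bool]) -> float:
        return sum(group) / len(group) if group else 0.0

    return rate(above), rate(below)


# Invented sample: (lead score, did the lead become an SQL?)
quarter = [
    (140, True), (125, True), (110, False), (105, True),
    (95, False), (90, True), (70, False), (40, False),
]

for threshold in (90, 100, 120):
    above_rate, below_rate = conversion_by_threshold(quarter, threshold)
    print(f"threshold {threshold}: {above_rate:.0%} at/above vs {below_rate:.0%} below")
```

The sketch only reports the rates rather than picking a "best" threshold automatically, since very high thresholds can look good on tiny groups; the final call would still be made with sales.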

This project shows what it takes to consolidate marketing infrastructure at enterprise scale after an acquisition — not just technically, but organizationally. I designed a global architecture that was flexible enough to handle eight regions' compliance and market requirements within a single system. I built the operating model for QA, release governance, and cross-regional rollout. I got eight teams who were comfortable with their local workarounds to adopt a unified platform — not by mandating it, but by proving it worked in the pilot and documenting everything well enough that adoption was the obvious choice. The result was a system where campaign execution, data quality, consent management, and lead routing all work together — and where regional teams can operate within it independently.