SDLC & RCA Modernization

Marketing & Guest Services Applications — Vail Resorts

The team was not failing for lack of talent. It was failing for lack of structure.

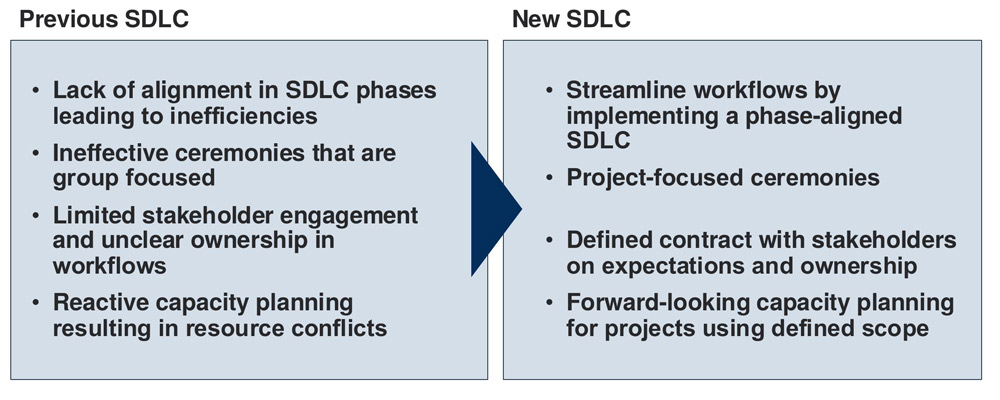

SDLC phases weren't aligned to actual work intake. Requirements arrived incomplete and changed mid-sprint without consequence. There were no gated entry or exit criteria — work moved from "somebody mentioned it" to "somebody is coding it" without deliberate planning.

All ceremonies were group-focused: standups covered every project in a single session, demos were aggregated, retrospectives were generic. This hid accountability. No single stakeholder could see the health of their project.

Stakeholders submitted requests informally — Slack, email, hallway conversations. There was no intake process, no acknowledgment SLA, and no clear owner for validating requirements or communicating status. The result was constant re-prioritization and a team that felt whipsawed by shifting demands.

Jira was not a system of record. Statuses were unreliable, dependencies weren't linked, and planning decisions were made from memory. QA was pulled in late, often after code was already in staging, with no formalized reporting or feedback loop. RCA existed in name only: incidents triggered a meeting, someone took notes, and the team moved on. The same incidents recurred because root causes were never systematically addressed.

This wasn't a top-down mandate. The team had been operating this way for years and was comfortable with it. Getting buy-in required showing — not telling — why the current approach was failing. The documentation package wasn't just a process reference; it was a persuasion tool. Every artifact was designed to answer the implicit question: "Why should I change how I work?" The compressed feedback cycle gave the team genuine voice in the process while maintaining momentum toward a firm go-live date.

The operating model rests on four pillars. Each addresses a specific failure mode; together they form one integrated system.

Phase-Gated SDLC

Gated Initiation and Planning phases with entry/exit criteria before sprint execution begins. Front-load clarity; eliminate mid-sprint surprises.

Jira as System of Record

Standardized ticket statuses, ownership rules, linking semantics, daily hygiene. Planning from data, not memory.

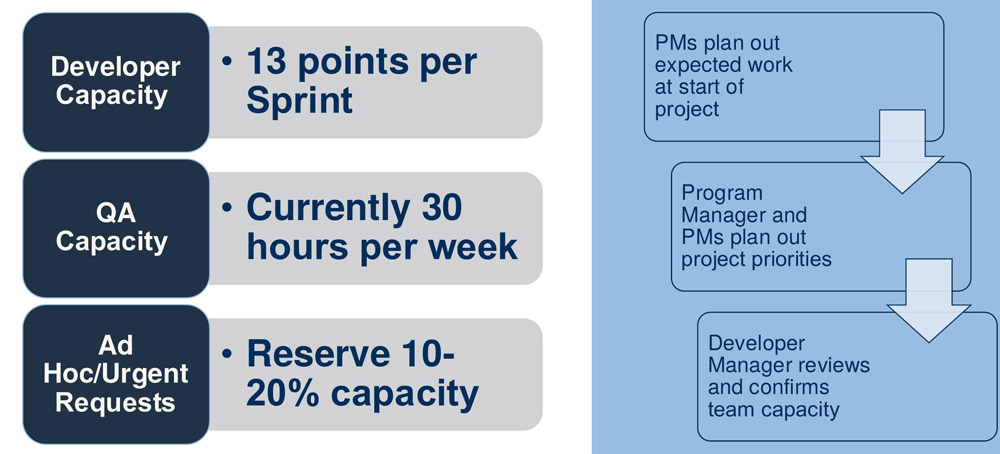

Predictable Capacity Management

13 dev points/sprint, QA hours tracked, 10–20% buffer reserved for urgent work. Over-commitment eliminated.

Institutional RCA

Severity-based triage, structured methods (5 Whys + Ishikawa), facilitator's handbook, action tracking, metrics. Blame-free, systems-focused.

Every process document, SOP, workflow, and deck listed here was designed and authored by me. The full package was circulated Dec 6, feedback-integrated by Dec 20, and adopted Jan 1.

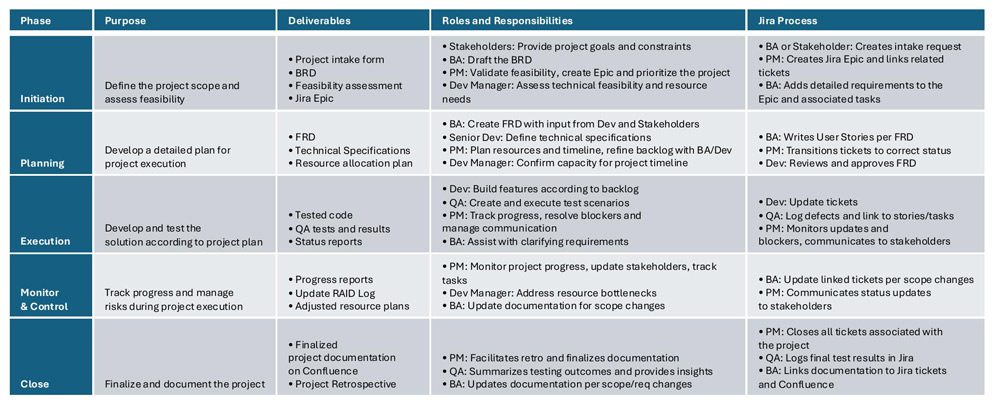

SDLC Phases & Governance

Each phase has a defined purpose, required deliverables, responsible roles, and Jira governance.

Artifacts

- SDLC Process Document — 5 phases with purpose, deliverables, roles, and Jira governance per phase

- SDLC Workflows SOP — lifecycle, backlog refinement, and deployment workflows (incl. Go/No-Go)

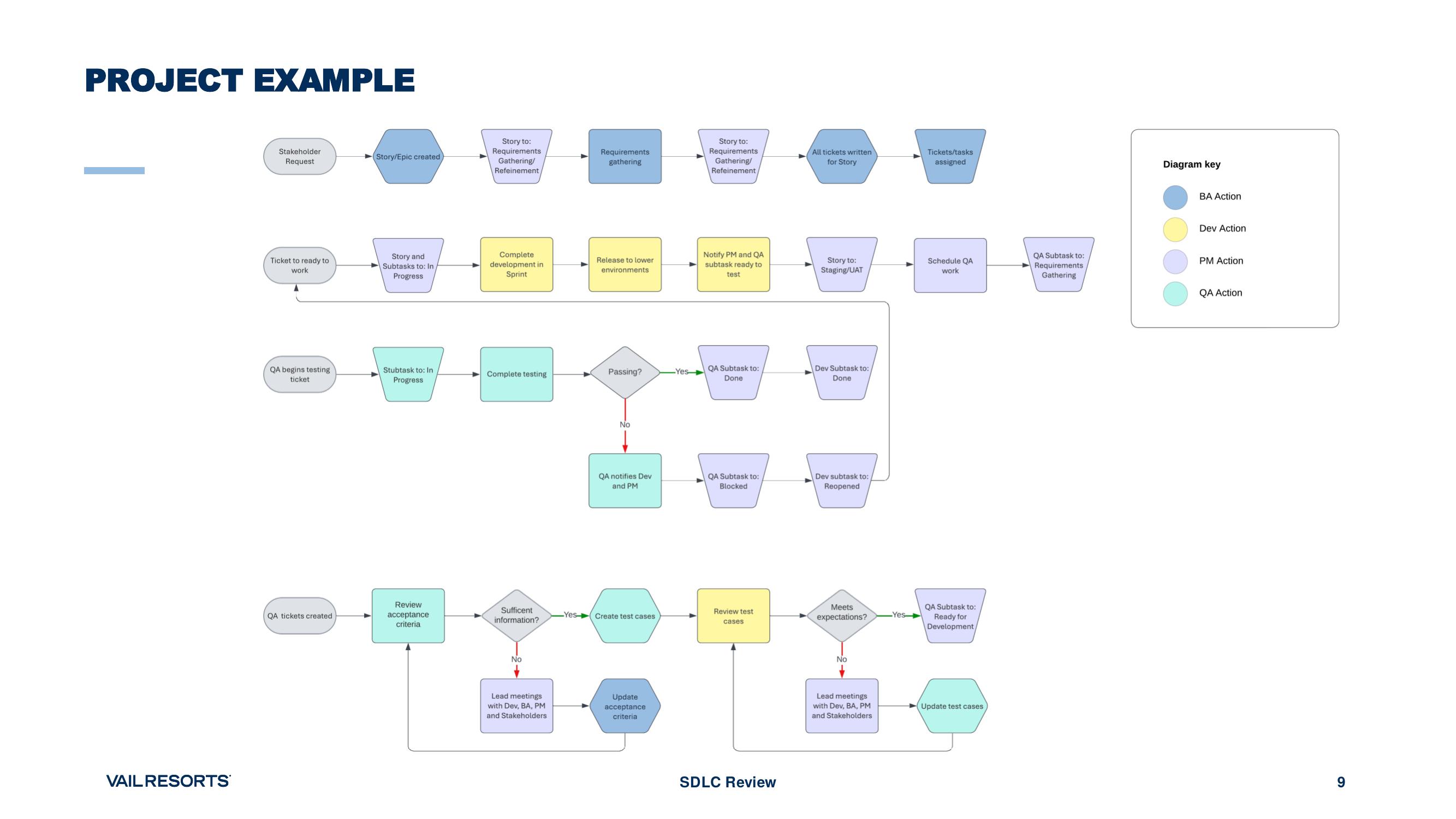

Project Workflow

Jira Governance

- Standardized ticket lifecycle: Nine defined statuses (Upcoming → Requirements Gathering/Refinement → Ready for Development → In Progress → Staging/UAT → Blocked → Done → Ready for Production → Won't Do) with clear ownership at each stage.

- Linking discipline: Standardized dependency vocabulary and linking semantics. Bugs linked to parent tickets. Cross-project dependencies visible.

- Daily hygiene: Status and time-tracking updates expected daily. Jira reflects reality, not aspiration.
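The daily hygiene rule can be sketched as a simple staleness check. This is a minimal illustration, not the team's actual tooling: the nine status names come from the list above, while the `Ticket` record, the `ACTIVE_STATUSES` set, and the one-day threshold are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Status(Enum):
    """The nine standardized ticket statuses named in the SOP."""
    UPCOMING = "Upcoming"
    REFINEMENT = "Requirements Gathering/Refinement"
    READY_FOR_DEV = "Ready for Development"
    IN_PROGRESS = "In Progress"
    STAGING_UAT = "Staging/UAT"
    BLOCKED = "Blocked"
    DONE = "Done"
    READY_FOR_PROD = "Ready for Production"
    WONT_DO = "Won't Do"


# Assumption: only these statuses are expected to receive a daily update.
ACTIVE_STATUSES = {Status.IN_PROGRESS, Status.STAGING_UAT, Status.BLOCKED}


@dataclass
class Ticket:
    """Hypothetical ticket record; a real one would come from a Jira export."""
    key: str
    status: Status
    last_updated: datetime


def stale_tickets(tickets, now, max_age=timedelta(days=1)):
    """Return keys of active tickets not updated within max_age."""
    return [
        t.key
        for t in tickets
        if t.status in ACTIVE_STATUSES and now - t.last_updated > max_age
    ]


now = datetime(2025, 1, 10, 9, 0)
tickets = [
    Ticket("APP-1", Status.IN_PROGRESS, datetime(2025, 1, 8, 9, 0)),   # two days old
    Ticket("APP-2", Status.IN_PROGRESS, datetime(2025, 1, 9, 17, 0)),  # updated yesterday
    Ticket("APP-3", Status.DONE, datetime(2025, 1, 2, 9, 0)),          # closed, ignored
]
print(stale_tickets(tickets, now))
```

A check like this could run before standup so that stale tickets are corrected in Jira rather than discussed from memory.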

Artifacts

- Jira Ticket Management SOP — 9 standardized statuses with role-based ownership

- Jira Definitions PDF — linking semantics and dependency vocabulary

- Application Intake Process SOP — intake form → BA validation → Jira creation → backlog routing

- Stakeholder Guide — how to submit, track, and expect updates on requests

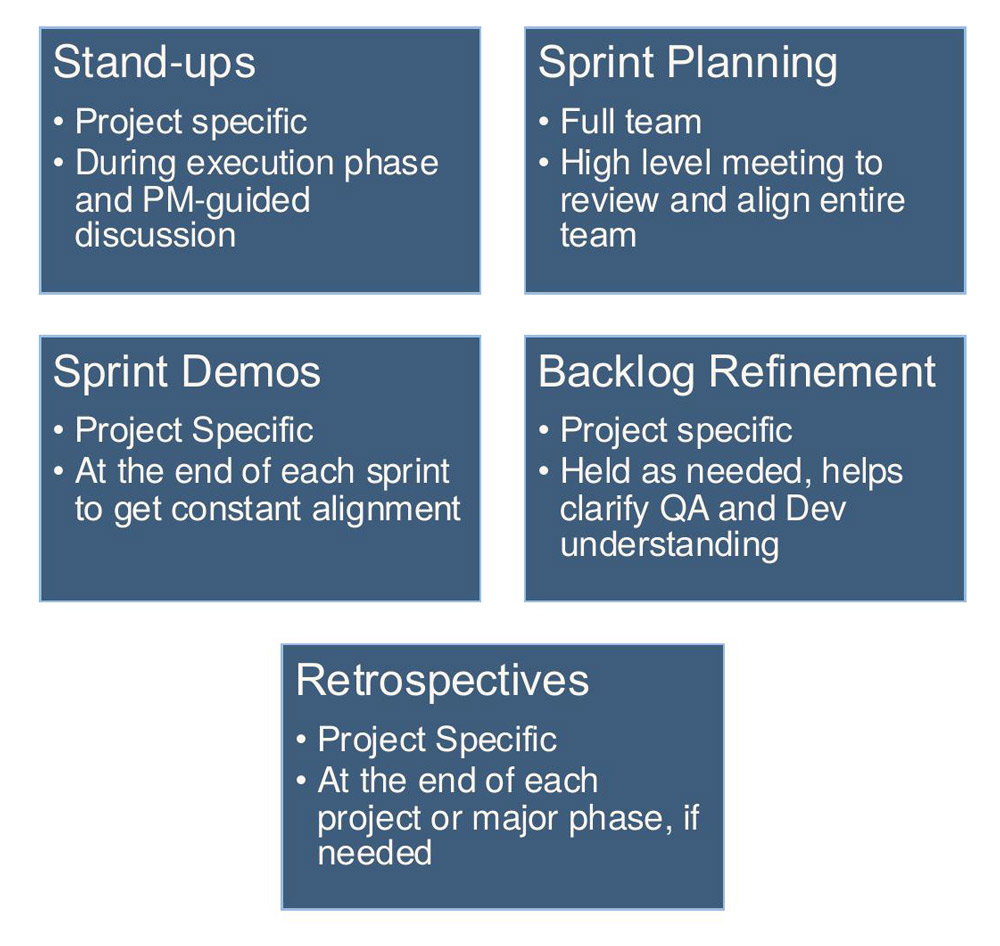

Project-Focused Ceremonies

Group-focused ceremonies hid accountability. Standups, demos, refinement, and retrospectives were restructured to be project-specific: standups (PM-guided, execution phase), demos (end-of-sprint, stakeholder-facing), refinement (as-needed), and retrospectives (project close). Sprint planning remained full-team for capacity coordination.

Capacity Management

Sprint capacity is calculated from Jira data: story points for developers, estimated hours for QA. A 10–20% buffer is reserved for urgent and ad hoc requests. When the buffer goes unused, it's reclaimed for backlog items.
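The buffer arithmetic can be sketched as follows. This is an illustrative calculation under stated assumptions: it treats the 13 developer points as the sprint's total capacity, and the `SprintCapacity` class and its method names are hypothetical, not part of the documented process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintCapacity:
    """Hypothetical helper: splits sprint points into plannable work and buffer."""
    total_points: float
    buffer_fraction: float  # the process reserves 10-20%

    def __post_init__(self):
        if not 0.10 <= self.buffer_fraction <= 0.20:
            raise ValueError("buffer_fraction must be between 0.10 and 0.20")

    @property
    def buffer_points(self) -> float:
        """Points held back for urgent and ad hoc requests."""
        return round(self.total_points * self.buffer_fraction, 2)

    @property
    def plannable_points(self) -> float:
        """Points that can be committed during sprint planning."""
        return round(self.total_points - self.buffer_points, 2)

    def reclaimable(self, urgent_points_used: float) -> float:
        """Unused buffer that can be returned to backlog items."""
        return max(0.0, round(self.buffer_points - urgent_points_used, 2))


cap = SprintCapacity(total_points=13, buffer_fraction=0.15)
print(cap.buffer_points, cap.plannable_points, cap.reclaimable(0.5))
```

With 13 points, the 10–20% range reserves between 1.3 and 2.6 points, leaving 10.4 to 11.7 points for planned work.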

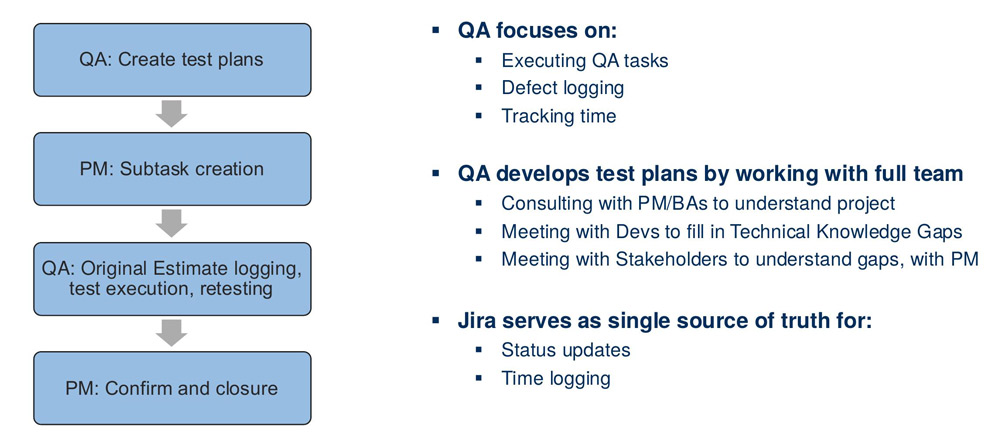

QA Operating Model

QA moved from an afterthought to a governed function. QA engages during Planning, not after code is in staging. Test scenarios are created alongside acceptance criteria. Weekly QA summary reports and bi-weekly feedback meetings create structured improvement loops. Recurring QA issues trigger the RCA process.

Artifacts

- QA Process + QA SOP — planning/execution/reporting loops, weekly summaries, bi-weekly feedback

- Capacity Management Process — sprint-level planning with reserved buffer and reclamation rules

RCA Program

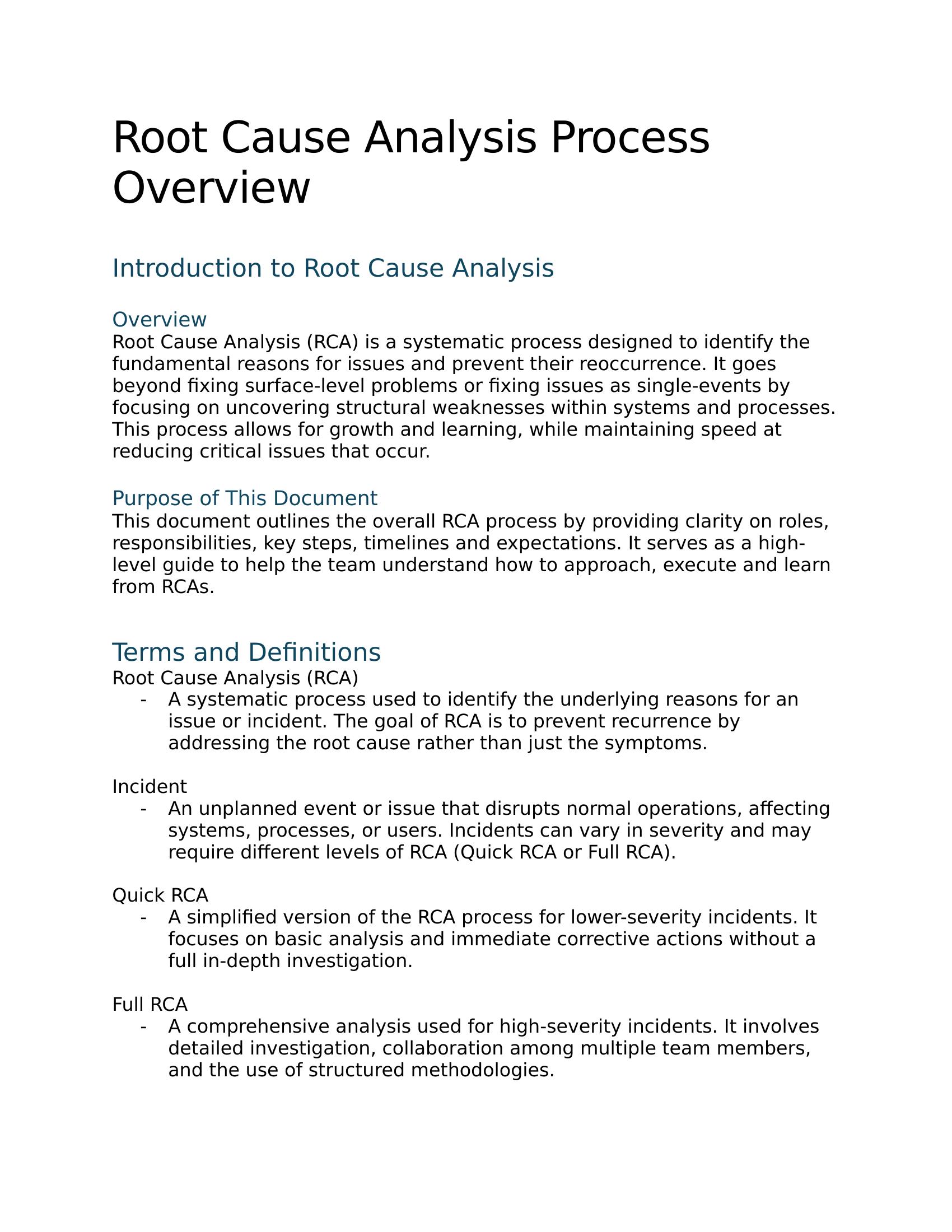

The RCA program has five steps: (1) Detection & Logging, (2) Triage — severity mapped to Quick RCA (30–45 min) or Full RCA (45–60 min), (3) Investigation using 5 Whys and Ishikawa, (4) Action Planning with owners and deadlines, (5) Follow-up & Metrics. Cultural frame: blame-free, systems-focused, compassionate.
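The triage step amounts to a lookup from severity to RCA depth. The sketch below assumes a four-level severity scale and a cutoff at `HIGH`; both are hypothetical, since the actual mapping lives in the Incident Triage Guide. Only the two formats and their time boxes come from the text above.

```python
from enum import IntEnum
from typing import NamedTuple


class Severity(IntEnum):
    """Hypothetical severity scale; the real levels are defined in the triage guide."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


class RcaFormat(NamedTuple):
    name: str
    min_minutes: int
    max_minutes: int


# Time boxes as stated in the RCA process.
QUICK_RCA = RcaFormat("Quick RCA", 30, 45)
FULL_RCA = RcaFormat("Full RCA", 45, 60)

# Assumption: incidents at or above this severity get a Full RCA.
FULL_RCA_THRESHOLD = Severity.HIGH


def triage(severity: Severity) -> RcaFormat:
    """Map an incident's severity to the RCA depth it should receive."""
    return FULL_RCA if severity >= FULL_RCA_THRESHOLD else QUICK_RCA


for level in Severity:
    fmt = triage(level)
    print(f"{level.name}: {fmt.name} ({fmt.min_minutes}-{fmt.max_minutes} min)")
```

Keeping the threshold in one named constant means the severity-to-depth rule can change without touching the rest of the triage logic.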

Artifacts

- RCA Process Overview — quick vs. full RCA, 5-step lifecycle, culture framing

- Incident Triage Guide — severity-to-depth mapping

- Facilitator's Handbook — 5 Whys + Ishikawa playbooks, action plan governance

Supporting Decks

- SDLC Review Deck (12/6) — challenges, phase overview, ceremonies, capacity, QA, project workflow, rollout dates

- Story Pointing Deck (12/11) — INVEST, estimation discipline, mock scenarios for scope creep and dependencies

Adoption was not assumed. It was engineered.

| Date | Milestone |
|---|---|
| Dec 6 | Full documentation package + SDLC Review deck circulated |
| Dec 9–13 | Team feedback window (engineers, QA, BA/PM) |
| Dec 16–20 | Feedback integrated; leadership formally endorsed the model (Dec 20) |
| Dec 30 | Final team walk-through |
| Jan 1 | New operating model live |

The rollout was deliberately compressed. A long change window invites drift. Four weeks — draft, feedback, integrate, approve, go-live — gave the team the full arc of proposal → participation → commitment without losing momentum.

| Metric | Improvement |
|---|---|
| Requirement clarification loops | Reduced ~50% |
| Reopened tickets | Reduced ~35–45% |
| Critical post-release defects | Reduced ~25–30% |
| Repeat incident recurrence | Reduced 30–50% (early indicators) |
| Stakeholder change requests post-planning | Reduced ~50% |

Jira became the source of truth. BA/PM/Dev/QA ownership was explicit. QA capacity became a planning input. RCA created institutional learning with tracked follow-through.

This project shows what I do: I walk into a team that's struggling with delivery — not because of bad people, but because of missing structure — and I build the operating model that fixes it. I design the governance, write the documentation, align leadership, and drive adoption. The artifacts I create are designed to persist without me. The systems I build connect intake to requirements to delivery to quality to continuous improvement as one coherent whole. I've done this in a product-light environment with distributed stakeholders and no new headcount — just better structure, clearer ownership, and the discipline to follow through.